The “Energy Wall” of 2025: Why AI’s Biggest Rival Isn’t Regulation—It’s the Grid

A Defining Admission

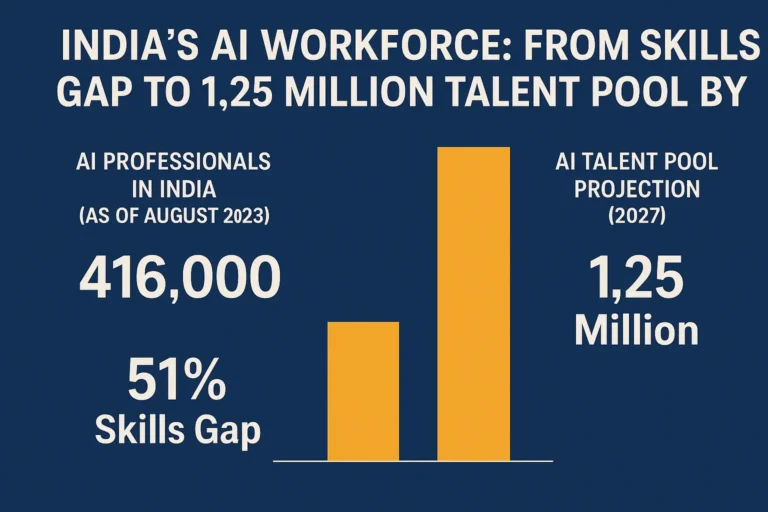

This remark by Satya Nadella, CEO of Microsoft, “It’s not a compute glut; it’s a power gap,” may ultimately be remembered as the sentence that defined artificial intelligence in late 2025. For nearly a decade, the dominant narrative around AI bottlenecks focused on data availability, advanced chips, talent shortages, and regulatory uncertainty. In 2025, however, a far more fundamental constraint moved to the centre of the conversation: electricity and power grid capacity.

Artificial intelligence has run headfirst into what industry insiders now describe as the Energy Wall—a hard physical limit where ambition, capital, and compute collide with the realities of aging and overstretched power infrastructure.

The $400 Billion Paradox: Capital Without Kilowatts

In 2025 alone, hyperscalers such as Google, Meta, Amazon, and Microsoft are projected to invest nearly US$400 billion in AI infrastructure, cloud expansion, and advanced semiconductors.

Yet despite this unprecedented spending spree, AI expansion is beginning to slow, not because of regulatory barriers or capital constraints, but because of limited power availability.

The emerging reality is stark:

- Data centers in parts of the US and Europe face grid connection wait times of up to seven years

- Utilities are imposing moratoriums on new high-density compute facilities

- AI data centers now compete directly with EV charging networks and green hydrogen projects for electricity

In short, capital is abundant. Megawatts are not.

AI’s Explosive Energy Appetite

Modern artificial intelligence—particularly large language models (LLMs)—is inherently energy-intensive.

Training a frontier-scale model typically requires:

- Tens of thousands of GPUs running continuously for weeks

- Massive cooling infrastructure, often consuming as much power as computation itself

- Always-on inference systems serving billions of daily queries across global markets
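The training-side components above can be turned into a rough back-of-the-envelope estimate. The sketch below is illustrative only: the GPU count, per-GPU power draw, run length, and the function name `training_energy_mwh` are assumptions chosen for the example, not figures from any specific model. A power usage effectiveness (PUE) of 2.0 stands in for the case where cooling and other overhead draw as much power as the computation itself. Inference load is not included.

```python
def training_energy_mwh(num_gpus: int, gpu_power_w: float, hours: float, pue: float) -> float:
    """Estimate facility-level energy for one training run, in MWh.

    num_gpus    -- number of accelerators running continuously
    gpu_power_w -- average draw per accelerator, in watts
    hours       -- wall-clock duration of the run
    pue         -- power usage effectiveness (total facility power / IT power)
    """
    it_energy_wh = num_gpus * gpu_power_w * hours  # energy used by the compute itself
    facility_energy_wh = it_energy_wh * pue        # add cooling and other overhead
    return facility_energy_wh / 1_000_000          # Wh -> MWh


# Hypothetical inputs: 20,000 GPUs at 700 W each for 30 days, with PUE = 2.0
energy = training_energy_mwh(num_gpus=20_000, gpu_power_w=700, hours=30 * 24, pue=2.0)
print(f"Estimated training energy: {energy:,.0f} MWh")
```

Under these assumed inputs the estimate comes to roughly 20,000 MWh for a single run. Lowering the PUE toward 1.0 shrinks the total proportionally, which is why cooling efficiency matters as much as chip efficiency.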

Key Stat:

By 2025, the carbon footprint of global AI data centre energy consumption is estimated to rival that of entire metropolitan areas such as New York City.

This surge has caught grid operators off guard. Power systems built for predictable industrial and residential loads are struggling to absorb AI’s sudden, concentrated demand spikes.

Regulation Stepped Aside—Physics Took Over

Until recently, policymakers and industry leaders believed AI regulation would be the primary brake on innovation. Instead, the market has encountered a far more unforgiving constraint: physics.

Power grids:

- Were designed decades ago for steady, predictable demand

- Require years to upgrade transmission lines and substations

- Face environmental, land-acquisition, and political approval delays

Even with fast-track permissions, meaningful grid expansion typically takes five to ten years. AI development cycles, by contrast, operate on timelines measured in months.

The result is a growing mismatch between digital ambition and physical infrastructure.

The Physical Pivot: From Bigger Models to Smarter Ones

This tension has triggered what The Quantiq identifies as a Physical Pivot in AI strategy.

Rather than scaling ever-larger, centralized models, companies are shifting toward energy-efficient intelligence.

The new direction:

- Small Language Models (SLMs) optimized for specific tasks

- On-premise and on-device AI deployments

- Edge AI in manufacturing, healthcare, agriculture, and mobility

SLMs:

- Consume a fraction of the power of large general models

- Reduce dependence on hyperscale data centers

- Enable faster, more private, and more reliable AI systems

This is not a retreat from AI capability—it is a strategic evolution driven by physical limits.

Energy Becomes a Boardroom Metric

Electricity is no longer a background cost in AI economics. It has become a core strategic variable.

Executives are now asking:

- How much value does each kilowatt-hour of AI generate?

- Is a marginal gain in model accuracy worth a disproportionate increase in energy cost?

- Can inference be localized to reduce transmission and cooling losses?
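The first two questions can be expressed as simple ratios. The following is a minimal sketch with made-up numbers: the `ModelOption` fields, both helper functions, and every value assigned to the two example models are hypothetical and serve only to show the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class ModelOption:
    name: str
    accuracy_pct: float          # task accuracy in percentage points (assumed metric)
    kwh_per_1k_queries: float    # energy used to serve 1,000 queries
    value_per_1k_queries: float  # business value of 1,000 queries, in USD


def value_per_kwh(m: ModelOption) -> float:
    """Value generated per kilowatt-hour of inference energy."""
    return m.value_per_1k_queries / m.kwh_per_1k_queries


def extra_kwh_per_accuracy_point(small: ModelOption, large: ModelOption) -> float:
    """Additional kWh per 1,000 queries paid for each extra accuracy point."""
    extra_energy = large.kwh_per_1k_queries - small.kwh_per_1k_queries
    extra_accuracy = large.accuracy_pct - small.accuracy_pct
    return extra_energy / extra_accuracy


# Hypothetical figures for a task-specific small model and a large general model
slm = ModelOption("task-specific SLM", accuracy_pct=90.0,
                  kwh_per_1k_queries=0.5, value_per_1k_queries=20.0)
llm = ModelOption("general LLM", accuracy_pct=92.0,
                  kwh_per_1k_queries=4.0, value_per_1k_queries=22.0)

for m in (slm, llm):
    print(f"{m.name}: ${value_per_kwh(m):.2f} per kWh")
print(f"Extra energy per accuracy point: {extra_kwh_per_accuracy_point(slm, llm):.2f} kWh per 1k queries")
```

With these assumed inputs, the smaller model returns far more value per kilowatt-hour, while the larger model spends 1.75 kWh per 1,000 queries for each additional accuracy point. Whether that trade is worthwhile depends on what an accuracy point is worth in the specific application.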

In this environment, energy efficiency is a competitive advantage. The most valuable AI systems of the next decade may not be the largest, but the most efficient per watt.

Climate Commitments Under Strain

Hyperscalers have publicly pledged:

- Net-zero emissions

- 100% renewable energy sourcing

- Water-positive data center operations

But the Energy Wall complicates these ambitions.

Renewable energy is:

- Intermittent

- Often distant from data center clusters

- Dependent on storage solutions that are still expensive at scale

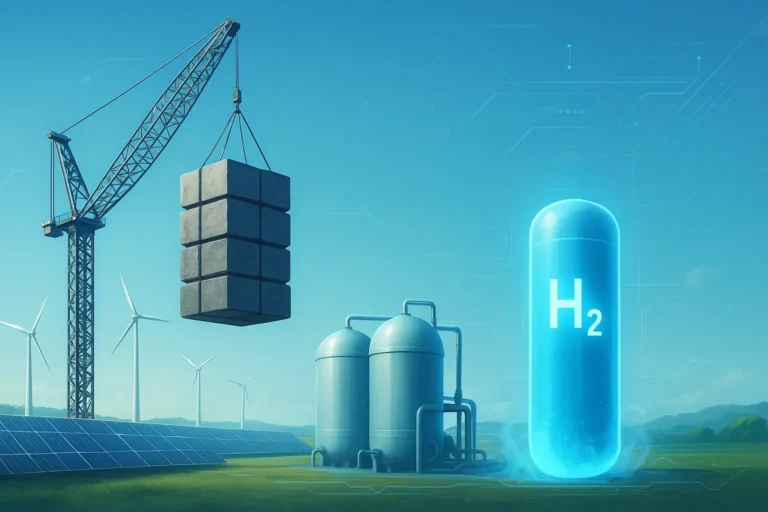

As AI demand accelerates faster than renewable deployment, companies are exploring direct investment in power generation—including nuclear, small modular reactors (SMRs), and captive solar and wind farms.

Big Tech is no longer just a consumer of energy. It is becoming an energy planner.

Implications for Startups, Governments, and Emerging Markets

For startups

- Energy-efficient AI is moving from niche to necessity

- Vertical, domain-specific AI gains an edge over general-purpose systems

For governments

- AI competitiveness now depends on grid modernization

- Energy policy is becoming AI policy

For emerging markets

- Localized, efficient AI deployments enable leapfrogging

- Massive hyperscale infrastructure is no longer the only path to relevance

This shift opens new opportunities for regions previously constrained by limited power infrastructure.

The Quantiq View: Intelligence Will Follow Power

The AI race of the early 2020s was about scale.

The AI race of the late 2020s will be about efficiency, sustainability, and physical realism.

The Energy Wall is not a temporary hurdle. It is a structural constraint reshaping:

- Model architectures

- Investment strategies

- Climate commitments

- Global AI geopolitics

The future of artificial intelligence belongs not to the biggest models—but to the most energy-intelligent ones.
