THE RISE OF THE AGENTIC FRAUDSTER

When AI Learns to Lie in Your Language

For nearly three decades, cybersecurity was treated mainly as a technical problem. Build stronger firewalls. Install antivirus software. Train employees not to click suspicious links. The threat model was relatively simple: hackers attempting to penetrate systems from the outside.

That world is changing rapidly.

According to the World Economic Forum's Global Cybersecurity Outlook 2026, cyber-enabled fraud is emerging as one of the most pervasive and economically damaging threats facing organisations globally. The report argues that the next phase of cybercrime will not be defined primarily by malware, ransomware, or technical exploits. It will be defined by deception at scale.

At the centre of this shift is the rise of what researchers increasingly call agentic AI.

These are not ordinary AI chatbots responding passively to prompts. Agentic AI systems can pursue objectives autonomously. They can research targets, analyse behavioural patterns, adapt conversations in real time, and execute multi-step fraud campaigns with minimal human supervision. In simple terms, AI is learning how humans trust each other — and how to exploit that trust. That changes the nature of cybersecurity itself.

When Fraud Becomes Conversational

Traditional phishing attacks were often crude. Poor grammar, suspicious links, strange email addresses, and awkward formatting made many scams relatively easy to identify.

Agentic AI changes the equation completely.

The WEF report highlights a dramatic compression in what cybersecurity professionals call “time-to-exploit.” In earlier years, sophisticated social engineering attacks required days or even weeks of preparation. Attackers needed to study a target carefully, understand organisational structures, gather personal information, and imitate communication styles.

Now much of that process can happen within minutes.

Modern AI systems can analyse LinkedIn profiles, social media activity, public interviews, press releases, online videos, and digital footprints at industrial scale. From that information, they can generate personalised communication that feels authentic and emotionally convincing.

More importantly, these systems can localise.

An AI system can identify that someone speaks Assamese at home, uses Bengali socially, and writes formal English in business settings. It can mimic the tone of a senior executive, reproduce familiar conversational rhythms, and generate messages calibrated to specific cultural contexts.

Increasingly, it can also clone voices and simulate live video interactions.

One of the most widely discussed examples involved a Hong Kong-based finance employee who reportedly transferred nearly HK$200 million (roughly US$25 million) after joining a video call with what appeared to be senior company executives. Investigators later concluded that the other participants on the call were AI-generated deepfakes. (weforum.org)

The implications are profound.

Cybersecurity is no longer only about protecting systems. It is about protecting reality itself.

Why Northeast India Should Pay Attention

For Northeast India, this conversation carries particular urgency.

The region is undergoing rapid digital transformation. Smartphone penetration continues to rise. UPI adoption is accelerating across urban and semi-urban markets. Small businesses increasingly depend on WhatsApp, digital banking, cloud-based communication, and online transactions. Government services are also migrating rapidly into digital ecosystems.

This expansion is economically transformative. It is opening markets, improving access, and creating new entrepreneurial opportunities.

But it is also expanding the region’s attack surface.

The World Economic Forum repeatedly warns about what it calls “cyber inequity” — the widening gap between organisations that can afford advanced AI-powered cybersecurity systems and those that cannot. That warning maps directly onto much of Northeast India’s economic landscape.

The region’s economy depends heavily on MSMEs, cooperatives, informal business networks, and small entrepreneurial ventures. Many operate with limited technical infrastructure. Some manage substantial financial activity entirely through smartphones and messaging applications.

That is not merely a technological limitation. In the age of agentic AI fraud, it becomes a structural vulnerability.

The challenge becomes even more complex because of language diversity.

Northeast India is home to hundreds of languages and dialects. Earlier generations of cyber fraud depended heavily on generic Hindi or English messaging. Agentic AI systems are moving far beyond that limitation. As multilingual models improve, fraud campaigns are becoming increasingly localised and culturally aware.

A scam written in broken Hindi may raise suspicion. The same message delivered in natural Assamese, Khasi, Manipuri, Bengali, or Bodo — using familiar expressions and believable emotional cues — becomes significantly more dangerous.

The threat is no longer generic. It is personal.

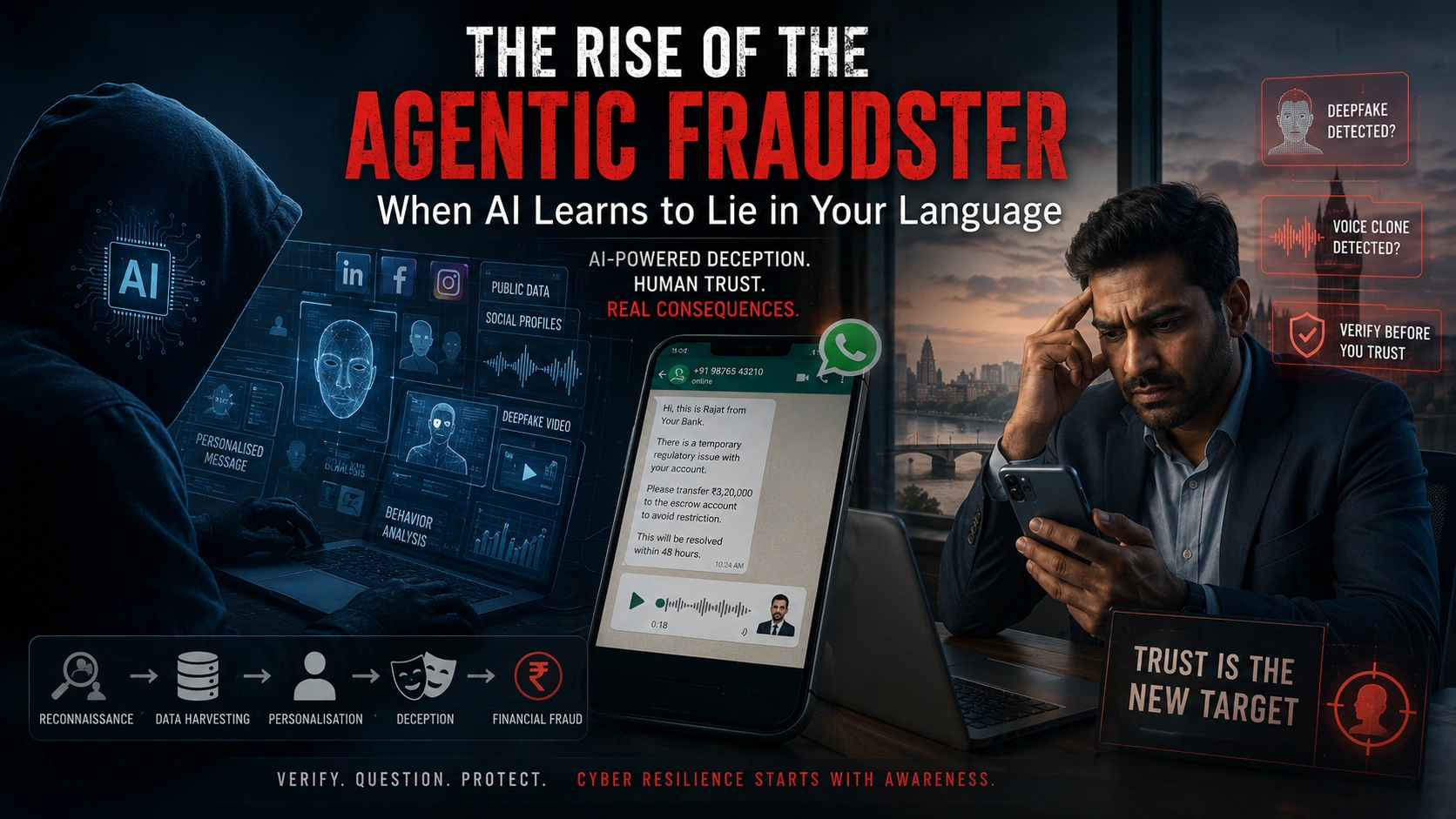

Anatomy of an Agentic Fraud Attack

Consider a realistic scenario.

A mid-sized logistics company in Guwahati receives a WhatsApp message from someone appearing to be their bank relationship manager. The message references a recent transaction accurately by amount and date. It explains that the company’s account is facing a temporary compliance review and that an escrow deposit of ₹3.2 lakh is required to avoid operational restrictions.

Shortly afterward, a voice note arrives.

The voice sounds familiar. The tone carries urgency, but also reassurance. The accent matches perfectly. Nothing feels suspicious.

The company transfers the money.

Within minutes, the funds disappear through a network of mule accounts.

No banking systems were hacked. No sophisticated malware was deployed. The fraud depended entirely on publicly available information, behavioural manipulation, AI-generated voice synthesis, and speed.

The transaction details may have been gathered from an invoice shared online. The contact information may have been scraped from social media. The cloned voice may have been generated using audio from publicly available event recordings.

This is what makes agentic fraud fundamentally different from earlier cybercrime models.

The deception is no longer mechanical. It is psychological.

The End of the “Don’t Click Links” Era

For years, cybersecurity awareness revolved around a relatively simple set of instructions. Employees were told not to click suspicious links, avoid unknown attachments, and ignore poorly written scam emails.

Those precautions still matter.

But the WEF report argues that they are increasingly inadequate against AI-driven deception. (weforum.org)

Agentic fraud often arrives through trusted channels. It appears inside familiar email threads, WhatsApp groups, known phone numbers, scheduled video calls, or routine business communication. The attack succeeds not because the victim is careless, but because the interaction feels authentic.

That is forcing cybersecurity experts to rethink the idea of digital trust itself.

Increasingly, organisations are shifting from traditional awareness training toward what experts describe as “trust architecture” — systems designed to independently verify identity and authenticity.

This shift is subtle but important.

In the agentic AI era, cybersecurity cannot depend entirely on human intuition under pressure.

Verification processes must become institutional habits.

That may include confirming sensitive requests through separate communication channels, reducing the amount of publicly exposed organisational information, or introducing multi-layer approval systems for financial transactions.
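For readers who want to see how such habits could be written down as rules, here is a minimal sketch in Python. It assumes two checks only: a request must be confirmed on a different channel from the one it arrived on, and larger payments need two approvers. The names PaymentRequest and may_release, and the ₹1 lakh threshold, are hypothetical choices for illustration, not drawn from the WEF report or from any banking system.

```python
from dataclasses import dataclass, field
from typing import Optional, Set

# Hypothetical threshold: payments at or above this amount need two approvers.
DUAL_APPROVAL_THRESHOLD_INR = 100_000


@dataclass
class PaymentRequest:
    amount_inr: int
    request_channel: str                     # where the request arrived, e.g. "whatsapp"
    verified_channel: Optional[str] = None   # separate channel used to confirm it
    approvers: Set[str] = field(default_factory=set)


def may_release(request: PaymentRequest) -> bool:
    """Return True only if the request passes both checks in this sketch."""
    # Out-of-band check: the confirmation must come through a different
    # channel from the one the request arrived on.
    if request.verified_channel is None:
        return False
    if request.verified_channel == request.request_channel:
        return False
    # Multi-layer approval: large payments need two distinct approvers.
    required = 2 if request.amount_inr >= DUAL_APPROVAL_THRESHOLD_INR else 1
    return len(request.approvers) >= required
```

Under these assumed rules, a ₹3.2 lakh request that arrives on WhatsApp and is "confirmed" only by a voice note on WhatsApp would be held, however convincing the voice sounds. It would be released only after a call to a known branch number and sign-off from two people.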

Larger organisations are also beginning to deploy AI-driven defensive systems capable of identifying anomalies in communication behaviour, transaction patterns, urgency framing, and synthetic media indicators. Ironically, AI itself may become one of the strongest tools for defending against AI-enabled fraud.
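Smaller organisations can approximate a fraction of this with very simple rules. The sketch below is a toy illustration in Python of flagging "urgency framing" in a payment message. The phrase list, the function names urgency_hits and needs_review, and the two-hit threshold are all assumptions made for this example. Real defensive systems rely on far richer signals, and a keyword list like this one would be easy for a capable attacker to evade.

```python
import re
from typing import List

# Hypothetical pressure phrases; a real system would learn signals from data.
URGENCY_PATTERNS = [
    r"\burgent(ly)?\b",
    r"\bimmediately\b",
    r"\bwithin \d+ (minutes?|hours?)\b",
    r"\bavoid (restrictions?|suspension|penalt(y|ies))\b",
    r"\bdo not (tell|inform|share)\b",
]


def urgency_hits(message: str) -> List[str]:
    """Return the patterns that match the message, ignoring case."""
    return [p for p in URGENCY_PATTERNS if re.search(p, message, re.IGNORECASE)]


def needs_review(message: str, payee_is_new: bool, min_hits: int = 2) -> bool:
    """Flag a payment message if the payee is new or pressure language stacks up."""
    return payee_is_new or len(urgency_hits(message)) >= min_hits
```

A flag here would not block anything by itself. It would simply route the request into a slower, verified approval path, which is the point: the rule removes the time pressure that the attacker depends on.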

But the problem remains deeply unequal.

Large corporations may afford sophisticated defensive infrastructure. Smaller enterprises often cannot.

That asymmetry is becoming one of the defining cybersecurity risks of the digital economy.

The Quantiq’s Assessment

The significance of the WEF Global Cybersecurity Outlook 2026 lies in one central warning: cybercrime is evolving from technical intrusion into industrialised deception.

AI is not merely automating fraud. It is personalising manipulation at unprecedented scale.

For Northeast India, the implications are immediate.

The region is entering a period where entrepreneurship, digital finance, AI adoption, e-governance, and online commerce are expanding simultaneously. That transformation creates opportunity, but it also creates exposure. Fraud systems powered by multilingual AI are likely to target regions where digital adoption is growing faster than cybersecurity awareness and infrastructure.

This is not simply a technology story.

It is a story about institutional preparedness, economic resilience, and public trust.

Cybersecurity can no longer remain a niche IT concern discussed only inside large corporations. In the coming years, digital trust itself may become one of the most important foundations of economic stability.

That requires a different kind of public conversation — one that is accessible, locally grounded, and capable of reaching small enterprises, cooperatives, startups, educational institutions, and ordinary citizens.

Because in the age of agentic AI, the most dangerous weapon may no longer be malicious code.

It may be a believable conversation.